Peer Reviewed

On the same page? Experts are mostly, but not always aligned about disinformation in times of generative AI

We conducted an expert survey of almost a hundred academics, fact checkers, and journalists who actively work towards mitigating disinformation and providing policy advice in the European context to examine whether they share views on generative artificial intelligence’s (AI) role in disinformation. Findings show that fact checkers feel more confident in tackling AI-generated disinformation than academics or journalists, though experts broadly agree on the risks it poses for democracy and journalism. Regarding the attribution of responsibility to combat AI-generated disinformation, fact checkers place more onus on online platforms, while academics assign greater responsibility to news users.

Research Questions

- How competent do disinformation experts perceive themselves to be in dealing with AI-generated disinformation?

- How do disinformation experts evaluate the risks posed by AI-generated disinformation?

- To whom do disinformation experts attribute responsibilities in preventing and debunking AI-generated disinformation?

- To what extent do responses differ across expert groups (academics, fact checkers, journalists)?

Research note summary

- We conducted an online survey (N = 92) amongst three expert groups who are considered key external stakeholders in the EU’s efforts to address the risks of generative AI (Griffin, 2025) and that share the goal of mitigating disinformation: academics (n = 47), fact checkers (n = 29), and journalists (n = 16). We accounted for uneven and small group sizes through conservative data analysis and robustness checks. Participants were sampled through the European Digital Media Observatory (EDMO), a hub co-founded by the European Commission, which consults them regarding disinformation policies.

- We use the term disinformation (rather than misinformation), which is preferred by policymakers, given that emphasizing intent and harm provides a stronger basis for policy intervention (see Bleyer-Simon & Reviglio, 2024). Moreover, using generative AI to craft false narratives involves a conscious, intentional action, making disinformation the appropriate term for our focus.

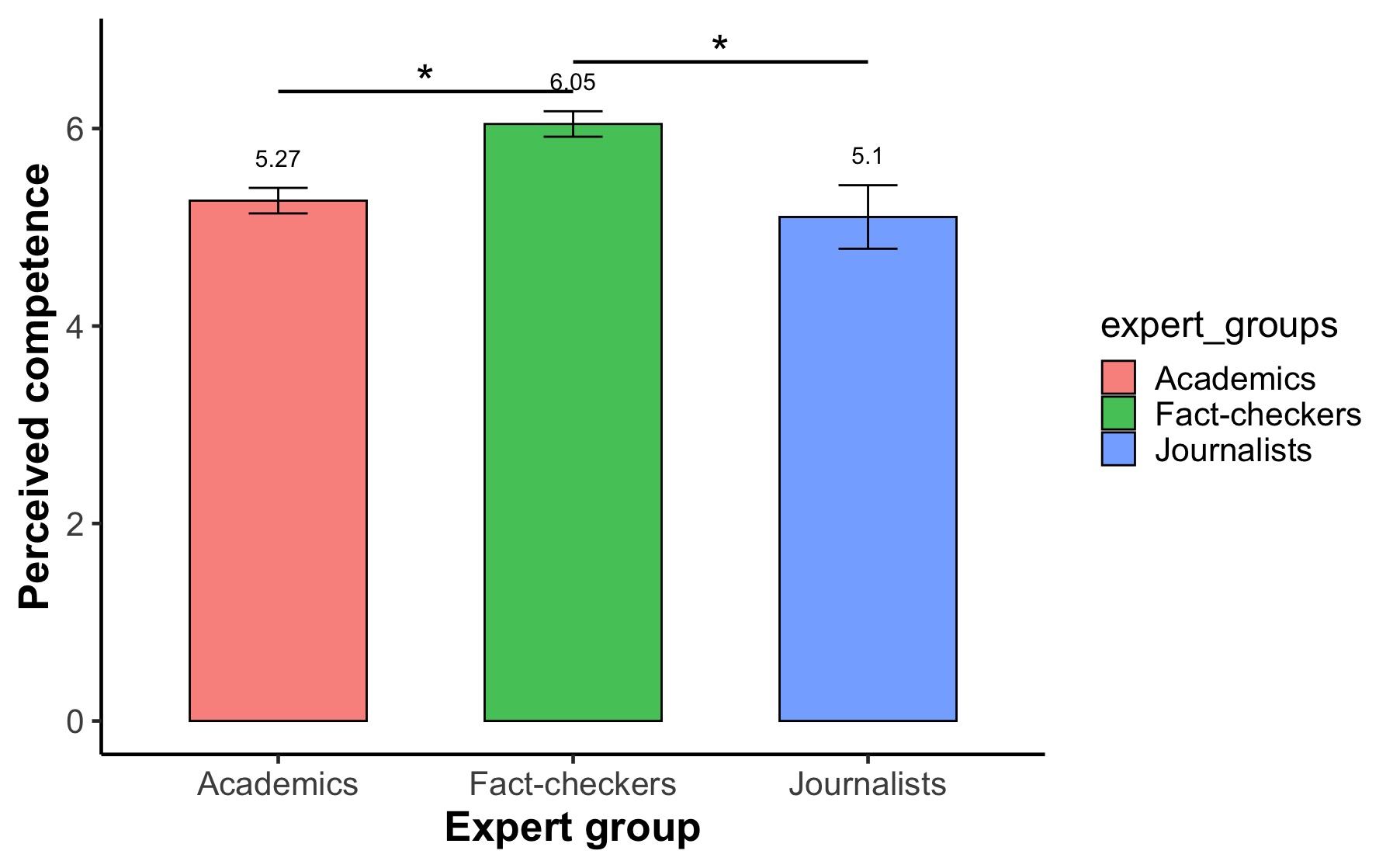

- While, on average, the surveyed experts perceive themselves as competent in doing their work in the context of AI, fact checkers are significantly more confident in their abilities compared to both academics and journalists.

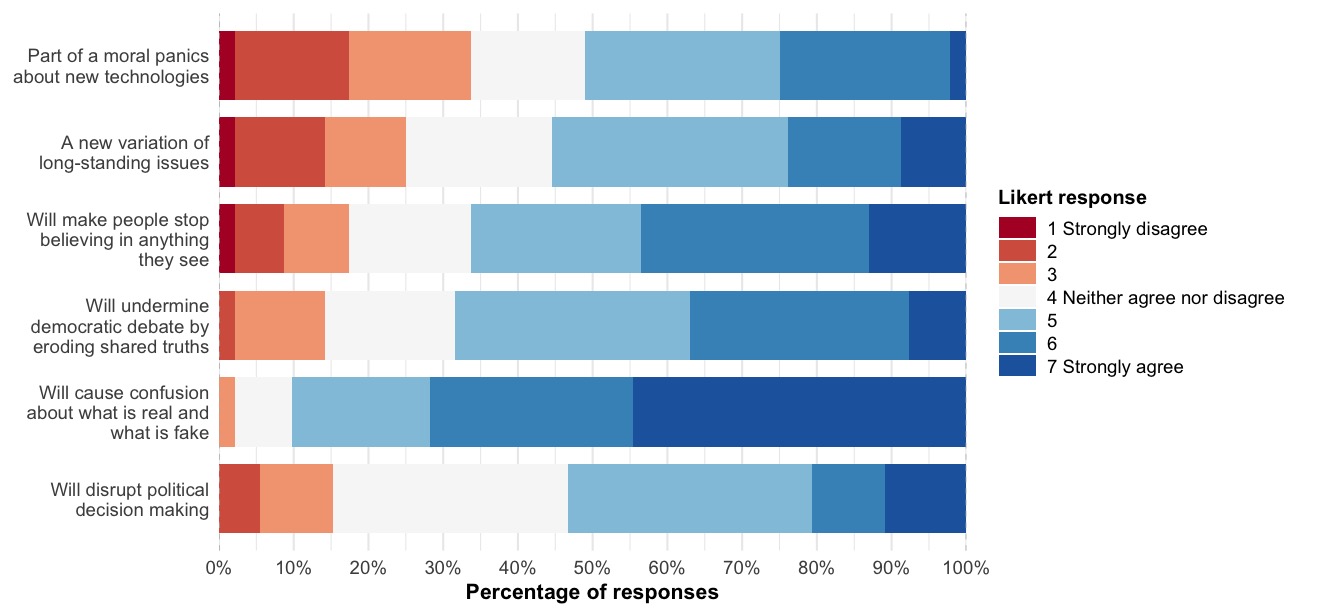

- Experts are on the same page regarding the risks they associate with AI-generated disinformation and show strong overall agreement that it may cause confusion about what is real and what is fake. They also mostly agree that generative AI is part of common panics that often surround new technologies.

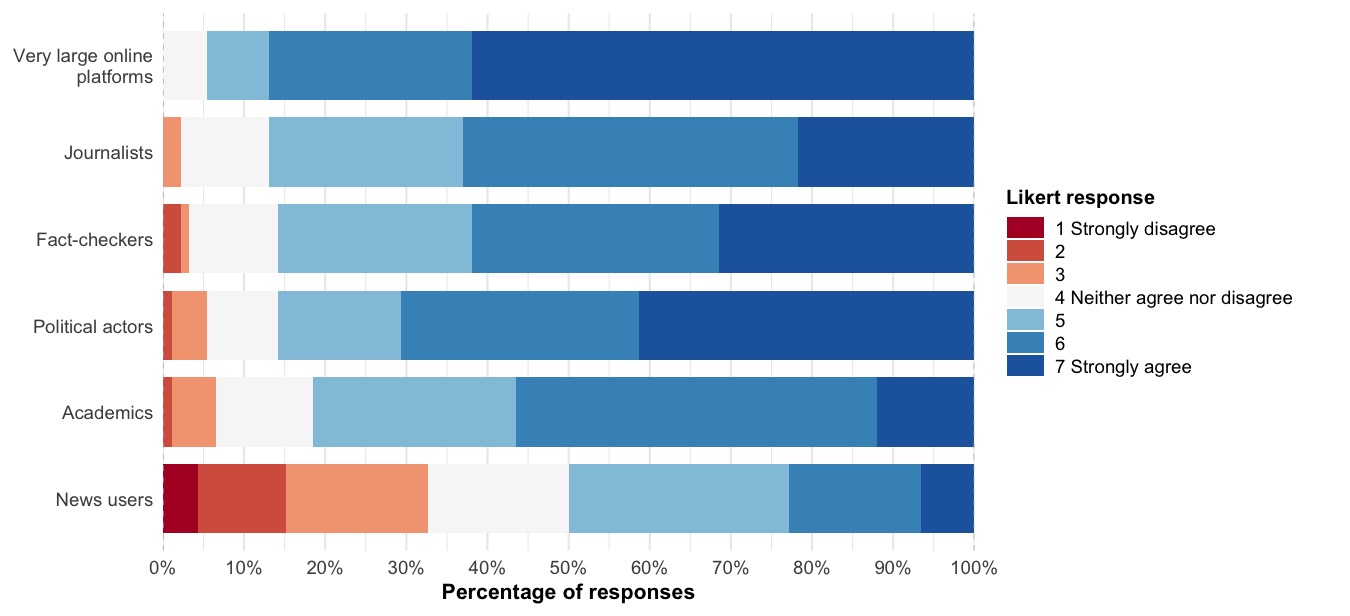

- Perceptions diverge when it comes to assigning responsibility in mitigating AI-generated disinformation. Fact checkers see online platforms as significantly more responsible actors, while academics place more responsibility on news users compared to the other groups. Fact checkers also put significantly more onus on themselves.

Implications

In an era when generative AI heightens concerns over disinformation, such as deepfakes and synthetic images (Adami, 2024), policymakers face pressure to design effective mitigation strategies (Griffin, 2025; Whyte, 2020). To inform evidence-based responses, they often rely on expert groups engaged in countering disinformation (Chystoforova & Reviglio, 2025). These experts come from diverse professional backgrounds, raising questions about whether they share a common understanding of AI’s impact. While consulting varied viewpoints is essential to tackle the issue from various angles, significant differences in assessments may lead to inconsistent messaging, and political actors may dismiss expert input as divided and therefore unreliable.

Previous research has examined how academics, fact checkers, and journalists assess AI-driven disinformation, though rarely in direct comparison (Kruger et al., 2024). Academics often see themselves as neutral information providers and emphasize platform design (Altay et al., 2023; Chystoforova & Reviglio, 2025), balancing warnings about deepfake threats with cautions against exaggeration (Chesney & Citron, 2019; Simon et al., 2023). Similarly, fact checkers tend not to overstate the dangers of AI-generated disinformation and see it as just one of many forms of disinformation they encounter (Weikmann & Lecheler, 2023). Given their hands-on approach, they often find new and creative ways to tackle this growing task (van Huijstee et al., 2021). Journalists, on the other hand, appear more alarmist, which may come from insufficient experience in recognizing AI-generated content and a tendency to take a pessimistic approach when reporting about AI (Wahl-Jorgensen & Carlson, 2021). Overall, these findings indicate that diverse professional backgrounds may lead to distinct perspectives, which need to be understood to interpret and implement experts’ policy recommendations adequately. Our study addresses this gap by directly comparing stakeholder perceptions across three dimensions: (1) self-perceived competence in dealing with disinformation in times of generative AI, (2) perceived risks posed by AI-generated disinformation, and (3) the attribution of responsibility in mitigating it.

Our findings indicate that, overall, the surveyed experts feel competent in addressing disinformation in the era of generative AI, with fact checkers standing out as the most confident. This finding is unsurprising given their comparatively greater familiarity with detection tools (Weikmann & Lecheler, 2023). Nonetheless, the significant gap between fact checkers and journalists is noteworthy and consistent with prior research (Gregory, 2021). Experts mostly agree on perceived risks. Importantly, two perspectives coexist: AI-generated disinformation is viewed both as part of long-standing challenges tied to emerging technologies (Simon et al., 2023) and as a potential source of confusion or a threat to democratic debate (Kruger et al., 2024). This suggests that experts do not regard these positions as mutually exclusive. We also found that journalists are significantly more likely to agree that, amid AI, seeing no longer means believing. This aligns with the tone of many news headlines about deepfakes and the generally alarmist stance the profession tends to adopt (Gosse & Burkell, 2020), even among journalists with disinformation expertise. When considering how this translates into EU policy frameworks, these frameworks appear to adopt a rather pragmatic stance overall, emphasizing the importance of verification, monitoring, and research. Across experts, there is a strong consensus that social media platforms bear responsibility for mitigating the potential impact of AI-driven disinformation, corroborating previous findings (e.g., Altay et al., 2023). In line with the growing emphasis on platform responsibility, the Digital Services Act (DSA) has risen prominently on policymakers’ agendas in recent years. Interestingly, academics assign significantly more responsibility in countering AI-generated disinformation to news users than fact checkers do, reflecting a common scholarly emphasis on media literacy as a means of countering disinformation (Bulger & Davison, 2018).
However, current EU policy frameworks address media literacy rather indirectly, for instance, through commitments made by platforms to “empower users” in the Code of Conduct on Disinformation. This finding also emerges at a time when researchers are increasingly examining the effectiveness of community notes, which Meta designated in January 2025 as its exclusive method of moderating disinformation. Lastly, fact checkers place greater responsibility on themselves, a stance that aligns with both their perceived competence and their frequent role as first responders in identifying and addressing false information (Graves & Mantzarlis, 2020).

These findings are relevant for both policymakers consulting disinformation experts and academics studying the impact of generative AI. For policymakers, it is important to consider the background of the experts they engage with, as differences in expertise can subtly influence perspectives regarding mitigation responsibilities. In turn, expert groups may propose different solutions: academics may favor more consumer-driven approaches, while fact checkers may favor technology-driven social interventions. At the same time, experts overall agree on their risk assessments and are generally confident in their preparedness to address AI-driven disinformation, further legitimizing their role. For researchers, the findings suggest several avenues for further investigation. One is to explore differences in competence between regular journalists who do not specialize in disinformation and fact checkers, which may reveal a greater divide between these closely related expert groups. Moreover, future research could check whether there is also a divide in actual competence. Even though their responses are grounded in professional knowledge and experience, our study only captures experts’ self-perceptions. Another is to continue tracking expert perceptions over time, particularly given their shared view that AI-generated disinformation is a serious concern likely to cause increasing confusion in the future. While seen as part of a long-standing challenge, it is also seen as a pressing one, and expert voices may grow even more cautionary.

A noteworthy limitation of our study is the uneven and sometimes small group sizes, which we address through conservative analytical approaches and robustness checks (see Appendix A). In addition, we acknowledge that our study is specific to the EU expert ecosystem, which may not reflect conditions in the Global South (Vinhas & Bastos, 2025) or in more authoritarian contexts, where experts may be more hesitant about the role of the state in regulating disinformation (Tully et al., 2022). Moreover, further research is needed to examine how stakeholders’ preferences relate to actual policy, as assessing whether experts’ differing responsibility assignments translate into policy outcomes lies beyond the scope of this study. Interviews with policymakers, for instance, could clarify which inputs are taken into account and whether debates are shaped more by alarmist narratives or by more nuanced perspectives. Overall, we conclude that although expert groups may at times offer conflicting input, they share common ground on the roots and nature of disinformation (see also Altay et al., 2023; Kruger et al., 2024). Differences in proposed actions are best understood as complementary, reflecting the inherently shared responsibility of multiple actors (Young, 2006).

Findings

Finding 1: While overall confidence is high, fact checkers feel more competent to deal with AI-generated disinformation compared to academics and journalists.

Overall, the experts who filled out our online survey, namely academics (n = 47), fact checkers (n = 29), and journalists (n = 16), reported a high average level of confidence when it comes to doing their work on AI-generated disinformation, signified by a high mean value (M = 5.49, SD = 0.98) measured on a scale from 1 to 7. This average score is higher than what had been observed in citizen samples using a similar measurement scale (e.g., Hopp, 2021; Weikmann et al., 2025). To explore differences between the expert groups in our sample, we conducted a Welch’s ANOVA, which revealed a statistically significant difference, F(2, 36.20) = 10.23, p < .001. We followed up with a Games-Howell post-hoc test. As can be seen in Figure 1, fact checkers (M = 6.05, SD = 0.69) felt significantly more competent than both academics (M = 5.27, SD = 0.89, p < .001) and journalists (M = 5.10, SD = 1.29, p = .034). No significant difference was found between academics and journalists (p = 1.00).

Finding 2: Experts are largely aligned regarding how they perceive the risks posed by AI-generated disinformation and emphasize the risk of confusion about what is real and fake.

We conducted Welch’s ANOVA to explore whether the surveyed experts agreed on the risks they believe are posed by AI-generated disinformation by having them rate six statements on a 7-point Likert scale, ranging from “Strongly disagree” to “Strongly agree” (see Figure 2). We could not find significant differences for most variables, demonstrating strong alignment overall; thus, we pooled participants’ responses. Ninety percent of experts agreed that AI-generated disinformation will cause confusion about what is real and fake, with 45% agreeing strongly. In addition, there was agreement that it will undermine democratic debate, indicated by 69% of the sample, who answered above “Neither agree nor disagree” on the 7-point scale. When asked about the extent to which these risks may be overstated (Simon et al., 2023), there was more balance in the responses. While most agreed, 33% disagreed (lower than 4 on the 7-point scale) that AI-generated disinformation is part of moral panics about new technologies, and 25% disagreed (lower than 4 on the 7-point scale) that it is simply a variation of long-standing issues surrounding mis- and disinformation. When asked whether AI-generated disinformation will disrupt political decision-making, this question had the highest percentage of neutral responses, with 32% neither agreeing nor disagreeing. These findings are reported in the stacked bar plot below (see Figure 2).

Only one test indicated a significant difference across groups, specifically regarding the risk that people will stop believing in anything they see, F(2, 46.44) = 5.98, p = .005, which journalists (M = 5.81, SD = 0.98) rated significantly higher than both academics (M = 4.85, SD = 1.49, p = .015) and fact checkers (M = 4.62, SD = 1.66, p = .011). No significant difference was found between academics and fact checkers (p = .814).

Finding 3: Experts have differing views when asked who is responsible for countering AI-generated disinformation; fact checkers attribute more responsibility to online platforms compared to academics, who in turn hold news users more responsible.

We explored whether the expert groups attributed the responsibility for countering AI-generated disinformation and its societal impact differently. This was partially the case. While there was high consensus that very large online platforms are accountable (62% strongly agreed), fact checkers (M = 6.69, SD = 0.60) on average agreed significantly more than academics (M = 6.23, SD = 0.89), p = .026. In addition, fact checkers (M = 6.07, SD = 0.99) agreed significantly more that fact checkers (i.e., themselves) are responsible when compared to academics (M = 5.38, SD = 1.21), p = .025. Regarding news users, academics (M = 4.74, SD = 1.39) viewed them as significantly more responsible compared to fact checkers (M = 3.62, SD = 1.54), p = .006, but not compared to journalists (M = 4.06, SD = 1.73). We did not find any significant group differences regarding the attribution of responsibility towards journalists, political actors, or academics. Figure 3 visualizes agreement with the questions for all surveyed experts combined.

Methods

To explore expert perceptions regarding generative AI, we conducted an online survey (N = 92) among academics (n = 47), fact checkers (n = 29), and journalists (n = 16) who work towards mitigating disinformation. Participants were drawn from the European Digital Media Observatory (EDMO), a hub co-founded by the European Commission. As members of this network, we leveraged our access to its mailing lists, such as the fact-checking group and various hub-specific lists, as well as personal contacts, to distribute the survey and increase diversity in the sample. Data collection took place between January and May 2025 to ensure broad participation and maximize response rates. Recruiting journalists required additional efforts due to the small pool of this expert group specializing in disinformation. Ethical approval was obtained from the University of Amsterdam (FMG-11584).

Participants reported basic demographic information (gender: female: n = 32, male: n = 59, other: n = 1; age: M = 40.93, SD = 11.63; education level: bachelor’s degree: n = 11, master’s degree: n = 43, PhD: n = 38), indicated the percentage of their work that focused on topics related to disinformation (M = 65.45, SD = 30.71) and how many years of experience they had working on the topic (M = 7.51, SD = 5.76). Respondents were asked to indicate which job descriptions most accurately reflected their current role. One participant indicated in an open answer field that they had multiple roles equally, so they were excluded from the analysis. To ensure participants had a shared understanding, we presented them with a definition of disinformation, namely: “content that (1) contains verifiably false or misleading information, (2) has the potential to cause societal harm, (3) is intentionally disseminated, and (4) serves possible economic or political objectives” (Bleyer-Simon & Reviglio, 2024, p. 3).

Perceived competence in dealing with AI-generated disinformation (M = 5.49, SD = 0.98, α = .77) was assessed by asking, on a 7-point Likert scale, the extent to which participants were confident in (1) their ability to detect disinformation effectively, (2) their knowledge and skills to conduct work around disinformation, and (3) their ability to distinguish between true and false or misleading information (see Lecheler et al., 2024).
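To illustrate how a reliability coefficient such as the reported α is typically obtained, the following is a minimal sketch of Cronbach’s alpha for a three-item scale. The response matrix `toy_responses` is invented purely for illustration and is not the study’s data, and the step that averages the items into one scale score is an assumption about how such a composite is commonly built, not a description of the authors’ procedure.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of item scores."""
    k = len(responses[0])  # number of items in the scale
    # Sample variance of each item across respondents
    item_variances = [variance([row[i] for row in responses]) for i in range(k)]
    # Sample variance of the summed score across respondents
    total_variance = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical answers of five respondents to three 7-point items
toy_responses = [
    [6, 5, 6],
    [5, 5, 4],
    [7, 6, 7],
    [4, 5, 5],
    [6, 6, 5],
]

alpha = cronbach_alpha(toy_responses)
# Assumed composite: the mean of the three items per respondent
scale_scores = [sum(row) / len(row) for row in toy_responses]
print(f"alpha = {alpha:.2f}")
print("scale scores:", [round(s, 2) for s in scale_scores])
```

Values closer to 1 indicate that the items vary together and can reasonably be combined into a single score.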

Perceived risks posed by AI-generated disinformation were measured using six items on 7-point Likert scales. These items were inspired by previous research, including studies formulating concrete scenarios of potential risks posed by AI-generated disinformation (Chesney & Citron, 2019) and research suggesting that such risks may be overstated (Simon et al., 2023). As these items do not constitute a scale, no means, standard deviations, or Cronbach’s alphas are reported.

Attribution of responsibility for addressing AI-generated disinformation was measured by asking participants, on a 7-point scale, to what extent they believed the following actors were responsible for countering AI-generated disinformation: (1) very large online platforms (e.g., Meta, Google, X, TikTok), (2) journalists working for legacy media, (3) independent fact checkers, (4) governments and political actors, (5) independent experts and academics, and (6) news users. Again, no scale was constructed for this variable.

In addition to providing descriptive statistics, we conducted significance tests to explore differences between expert groups. Given the relatively small sample size and uneven group distributions, we relied on the more conservative Welch’s ANOVA combined with a Games-Howell post hoc test (see Delacre et al., 2019). We also conducted non-parametric tests as a robustness check, as reported in the Appendix.
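For readers less familiar with Welch’s ANOVA, the sketch below shows how the test statistic and its adjusted denominator degrees of freedom can be computed from group sizes, means, and standard deviations alone. It uses the rounded perceived-competence summaries reported under Finding 1 as input, so it should land close to, but not exactly on, the reported F(2, 36.20) = 10.23. The names `GroupSummary` and `welch_anova` are illustrative, and this is not the authors’ analysis script; the p value and the Games-Howell comparisons are deliberately left out.

```python
from dataclasses import dataclass


@dataclass
class GroupSummary:
    name: str
    n: int
    mean: float
    sd: float


def welch_anova(groups):
    """Return (F, df1, df2) for Welch's one-way ANOVA from summary statistics."""
    k = len(groups)
    # Each group is weighted by n / variance, so noisy or small groups count less
    weights = [g.n / g.sd ** 2 for g in groups]
    total_weight = sum(weights)
    weighted_mean = sum(w * g.mean for w, g in zip(weights, groups)) / total_weight
    # Weighted between-group variability
    between = sum(
        w * (g.mean - weighted_mean) ** 2 for w, g in zip(weights, groups)
    ) / (k - 1)
    # Correction term that accounts for unequal variances and group sizes
    lam = sum(
        (1 - w / total_weight) ** 2 / (g.n - 1) for w, g in zip(weights, groups)
    )
    f_stat = between / (1 + 2 * (k - 2) / (k ** 2 - 1) * lam)
    df2 = (k ** 2 - 1) / (3 * lam)
    return f_stat, k - 1, df2


# Rounded summaries for perceived competence as reported under Finding 1
groups = [
    GroupSummary("academics", 47, 5.27, 0.89),
    GroupSummary("fact checkers", 29, 6.05, 0.69),
    GroupSummary("journalists", 16, 5.10, 1.29),
]

f_stat, df1, df2 = welch_anova(groups)
print(f"Welch F({df1}, {df2:.2f}) = {f_stat:.2f}")
```

Because the weights shrink as a group’s variance grows or its size falls, the small and more variable journalist group contributes less to the pooled estimate, which is why this approach suits uneven samples better than the classical F-test.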

Bibliography

Adami, M. (2024, March 15). How AI-generated disinformation might impact this year’s elections and how journalists should report on it. Reuters Institute. https://reutersinstitute.politics.ox.ac.uk/news/how-ai-generated-disinformation-might-impact-years-elections-and-how-journalists-should-report

Altay, S., Berriche, M., Heuer, H., Farkas, J., & Rathje, S. (2023). A survey of expert views on misinformation: Definitions, determinants, solutions, and future of the field. Harvard Kennedy School (HKS) Misinformation Review, 4(4). https://doi.org/10.37016/mr-2020-119

Bleyer-Simon, K., & Reviglio, U. (2024). Defining disinformation across EU and VLOP policies. European Digital Media Observatory. https://edmo.eu/wp-content/uploads/2024/10/EDMO-Report-%E2%80%93-Defining-Disinformation-across-EU-and-VLOP-Policies.pdf

Bulger, M., & Davison, P. (2018). The promises, challenges, and futures of media literacy. Journal of Media Literacy Education, 10(1), 1–21. https://doi.org/10.23860/JMLE-2018-10-1-1

Chan, Y., & Walmsley, R. P. (1997). Learning and understanding the Kruskal-Wallis one-way analysis-of-variance-by-ranks test for differences among three or more independent groups. Physical Therapy, 77(12), 1755–1761. https://doi.org/10.1093/ptj/77.12.1755

Chesney, B., & Citron, D. (2019). Deep fakes: A looming challenge for privacy, democracy, and national security. California Law Review, 107(6), 1753–1820. https://doi.org/10.15779/Z38RV0D15J

Chystoforova, K., & Reviglio, U. (2025). Framing the role of experts in platform governance: Negotiating the code of practice on disinformation as a case study. Internet Policy Review, 14(1). https://doi.org/10.14763/2025.1.1823

Delacre, M., Leys, C., Mora, Y. L., & Lakens, D. (2019). Taking parametric assumptions seriously: Arguments for the use of Welch’s F-test instead of the classical F-test in one-way ANOVA. International Review of Social Psychology, 32(1), 1–12. https://doi.org/10.5334/irsp.198

Gosse, C., & Burkell, J. (2020). Politics and porn: How news media characterizes problems presented by deepfakes. Critical Studies in Media Communication, 37(5), 497–511. https://doi.org/10.1080/15295036.2020.1832697

Graves, L., & Mantzarlis, A. (2020). Amid political spin and online misinformation, fact checking adapts. The Political Quarterly, 91(3), 585–591. https://doi.org/10.1111/1467-923X.12896

Gregory, S. (2021). Deepfakes, misinformation and disinformation and authenticity infrastructure responses: Impacts on frontline witnessing, distant witnessing, and civic journalism. Journalism, 23(3), 708–729. https://doi.org/10.1177/14648849211060644

Griffin, R. (2025). The politics of risk in the Digital Services Act: A stakeholder mapping and research agenda. Weizenbaum Journal of the Digital Society, 5(2). https://doi.org/10.34669/wi.wjds/5.2.6

Hopp, T. (2021). Fake news self-efficacy, fake news identification, and content sharing on Facebook. Journal of Information Technology and Politics, 19(2), 229–252. https://doi.org/10.1080/19331681.2021.1962778

Kruger, A., Saletta, M., Ahmad, A., & Howe, P. (2024). Structured expert elicitation on disinformation, misinformation, and malign influence: Barriers, strategies, and opportunities. Harvard Kennedy School (HKS) Misinformation Review, 5(7). https://doi.org/10.37016/mr-2020-169

Lecheler, S., Gattermann, K., & Aaldering, L. (2024). Disinformation and the Brussels bubble: EU correspondents’ concerns and competences in a digital age. Journalism, 25(8), 1736–1753. https://doi.org/10.1177/14648849231188259

Sauder, D. C., & DeMars, C. E. (2019). An updated recommendation for multiple comparisons. Advances in Methods and Practices in Psychological Science, 2(1), 26–44. https://doi.org/10.1177/2515245918808784

Simon, F. M., Altay, S., & Mercier, H. (2023). Misinformation reloaded? Fears about the impact of generative AI on misinformation are overblown. Harvard Kennedy School (HKS) Misinformation Review, 4(5). https://doi.org/10.37016/mr-2020-127

Tully, M., Madrid-Morales, D., Wasserman, H., Gondwe, G., & Ireri, K. (2022). Who is responsible for stopping the spread of misinformation? Examining audience perceptions of responsibilities and responses in six sub-Saharan African countries. Digital Journalism, 10(5), 679–697. https://doi.org/10.1080/21670811.2021.1965491

van Huijstee, M., van Boheemen, P., Das, D., Nierling, L., Jahnel, J., Karaboga, M., & Fatun, M. (2021). Tackling deepfakes in European policy. Publications Office of the European Union. https://doi.org/10.2861/325063

Vinhas, O., & Bastos, M. (2025). When fact-checking is not WEIRD: Negotiating consensus outside Western, educated, industrialized, rich, and democratic countries. The International Journal of Press/Politics, 30(1), 256–276. https://doi.org/10.1177/19401612231221801

Wahl-Jorgensen, K., & Carlson, M. (2021). Conjecturing fearful futures: Journalistic discourses on deepfakes. Journalism Practice, 15(6), 803–820. https://doi.org/10.1080/17512786.2021.1908838

Weikmann, T., & Lecheler, S. (2023). Cutting through the hype: Understanding the implications of deepfakes for the fact-checking actor-network. Digital Journalism, 12(10), 1–18. https://doi.org/10.1080/21670811.2023.2194665

Weikmann, T., Greber, H., & Nikolaou, A. (2025). After deception: How falling for a deepfake affects the way we see, hear, and experience media. The International Journal of Press/Politics, 30(1), 187–210. https://doi.org/10.1177/19401612241233539

Whyte, C. (2020). Deepfake news: AI-enabled disinformation as a multi-level public policy challenge. Journal of Cyber Policy, 5(2), 119–217. https://doi.org/10.1080/23738871.2020.1797135

Funding

This project has received co-funding from the European Union under Grant Agreement number 101158277-BENEDMO-DIGITAL-2023-DEPLOY-04.

Competing Interests

All authors are members of the expert network surveyed in this study, but did not partake in it themselves. This affiliation did not influence the study’s design, data collection, analysis, or interpretation of results.

Ethics

This study was approved by the University of Amsterdam’s Institutional Review Board (FMG-11584). Informed consent was received from all subjects.

Copyright

This is an open access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided that the original author and source are properly credited.

Data Availability

All materials needed to replicate this study are made available via the Harvard Dataverse: https://doi.org/10.7910/DVN/IO8HS7